Scaling out a Kubernetes cluster: adding a node

First, make the hosts entries identical on every node:

192.168.2.11 node01

192.168.2.12 node02

192.168.2.13 node03

192.168.2.144 db
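One way to apply these entries without duplicating them on repeated runs is sketched below. The names `add_host_entry`, `sync_cluster_hosts`, and `HOSTS_FILE` are illustrative and not part of the original procedure; the sketch assumes it is run as root on each node.

```shell
#!/usr/bin/env bash
# Hypothetical helper: keep the cluster entries in the hosts file without duplicates.
HOSTS_FILE="${HOSTS_FILE:-/etc/hosts}"

add_host_entry() {
    local ip="$1" name="$2"
    # Look for the host name as a whole field on any non-comment line.
    if ! awk -v n="$name" '
            !/^[[:space:]]*#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
            END { exit !found }' "$HOSTS_FILE" 2>/dev/null; then
        # Not present yet: append it.
        printf '%s %s\n' "$ip" "$name" >> "$HOSTS_FILE"
    fi
}

sync_cluster_hosts() {
    add_host_entry 192.168.2.11  node01
    add_host_entry 192.168.2.12  node02
    add_host_entry 192.168.2.13  node03
    add_host_entry 192.168.2.144 db
}
```

Calling `sync_cluster_hosts` a second time leaves the file unchanged, so the same script can be reused whenever another node is added.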

Upgrade kubeadm first

Set a proxy temporarily so that requests to the yum repository do not time out:

export http_proxy='http://192.168.2.2:1080'

export https_proxy='http://192.168.2.2:1080'

export ftp_proxy='http://192.168.2.2:1080'
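While these variables are exported, tools that honor the standard proxy variables (kubeadm among them) may also send requests for the API server through the proxy. A minimal sketch for keeping cluster traffic off the proxy is shown below; the address list assumes the node IPs from the hosts entries plus the virtual IP 192.168.2.10 used later in this article, and `clear_proxy` is an illustrative name.

```shell
# Assumption: cluster-internal addresses (the nodes and the VIP) must bypass the proxy.
export no_proxy='127.0.0.1,localhost,192.168.2.10,192.168.2.11,192.168.2.12,192.168.2.13,192.168.2.144'
export NO_PROXY="$no_proxy"

# Hypothetical helper: drop the proxy settings from the current shell
# once the packages have been installed.
clear_proxy() {
    unset http_proxy https_proxy ftp_proxy
}
```

Running `clear_proxy` before `kubeadm join` is the simpler option when the proxy is only needed for yum.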

Upgrade kubelet, kubeadm, and kubectl on every node:

cat <<EOF > /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=https://packages.cloud.google.com/yum/repos/kubernetes-el7-x86_64

enabled=1

gpgcheck=1

repo_gpgcheck=1

gpgkey=https://packages.cloud.google.com/yum/doc/yum-key.gpg https://packages.cloud.google.com/yum/doc/rpm-package-key.gpg

exclude=kube*

EOF

# Set SELinux in permissive mode (effectively disabling it)

setenforce 0

sed -i 's/^SELINUX=enforcing$/SELINUX=permissive/' /etc/selinux/config

yum install -y kubelet kubeadm kubectl --disableexcludes=kubernetes

systemctl enable --now kubelet

# Mirror inside China (an alternative to the Google repository above)

cat <<EOF > /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=http://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64

enabled=1

gpgcheck=0

repo_gpgcheck=0

gpgkey=http://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg http://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

When kubeadm init finishes initializing the cluster, the last thing it prints is the command for joining nodes to the cluster:

kubeadm join 10.0.0.39:6443 --token 4g0p8w.w5p29ukwvitim2ti --discovery-token-ca-cert-hash sha256:21d0adbfcb409dca97e655641573b2ee51c77a212f194e20a307cb459e5f77c8

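The command carries the token a node must present to be admitted to the cluster, which keeps malicious outside nodes from joining.

Each token expires 24 hours after it is created.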
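The value after `--discovery-token-ca-cert-hash` is the SHA-256 digest of the cluster CA's public key, so it can be recomputed on a master if only the token is at hand. The sketch below uses the usual openssl pipeline; it assumes the default kubeadm CA path `/etc/kubernetes/pki/ca.crt` and an RSA CA key, and `ca_cert_hash` is an illustrative name.

```shell
# Hypothetical helper: print the hex SHA-256 of the CA public key (DER-encoded).
ca_cert_hash() {
    local ca_crt="${1:-/etc/kubernetes/pki/ca.crt}"
    openssl x509 -pubkey -noout -in "$ca_crt" \
        | openssl rsa -pubin -outform der 2>/dev/null \
        | openssl dgst -sha256 -hex \
        | sed 's/^.* //'
}

# Existing tokens and their remaining lifetime can be checked on a master with:
#   kubeadm token list
```

On a master, `echo "sha256:$(ca_cert_hash)"` should print the same value that kubeadm shows in its join command.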

To add a node after the token has expired, generate a new join token with the command below, and remember to rewrite the IP address.

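# master-1: generate a join command that points at the VIP
# (double quotes are required so that the shell expands LOCAL_IP and VIP)

kubeadm token create --print-join-command | sed "s/${LOCAL_IP}/${VIP}/g"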

# For example:

## 192.168.2.10 is the virtual IP (VIP); 192.168.2.11 is the IP of master01 (node01 in the hosts entries)

[root@node01 ~]# kubeadm token create --print-join-command|sed 's/192.168.2.11/192.168.2.10/g'

kubeadm join 192.168.2.10:6443 --token xa4yyj.kd08o93audz1u8u3 --discovery-token-ca-cert-hash sha256:a927f92dd4c279c54944fea27f602dc75731cb53093d6c330e9e238556e1e93d
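The substitution can be wrapped in a small function so that the dots in the IP address are matched literally and only the API server endpoint is rewritten. This is a sketch: `rewrite_join_endpoint` is an illustrative name, and port 6443 is assumed because it is the port shown in the output above.

```shell
# Hypothetical helper: replace LOCAL_IP:6443 with VIP:6443 in text read from stdin.
rewrite_join_endpoint() {
    local local_ip="$1" vip="$2" pattern
    # Escape the dots so that "." matches a literal dot rather than any character.
    pattern="$(printf '%s' "$local_ip" | sed 's/\./\\./g')"
    sed "s/${pattern}:6443/${vip}:6443/"
}
```

With the addresses from this article, the call would be `kubeadm token create --print-join-command | rewrite_join_endpoint 192.168.2.11 192.168.2.10`.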

Run the command on the new node:

[root@db ~]# kubeadm join 192.168.2.10:6443 --token xa4yyj.kd08o93audz1u8u3 --discovery-token-ca-cert-hash sha256:a927f92dd4c279c54944fea27f602dc75731cb53093d6c330e9e238556e1e93d

[preflight] Running pre-flight checks

[WARNING Service-Docker]: docker service is not enabled, please run 'systemctl enable docker.service'

[WARNING SystemVerification]: this Docker version is not on the list of validated versions: 18.09.1. Latest validated version: 18.06

[discovery] Trying to connect to API Server "192.168.2.10:6443"

[discovery] Created cluster-info discovery client, requesting info from "https://192.168.2.10:6443"

[discovery] Requesting info from "https://192.168.2.10:6443" again to validate TLS against the pinned public key

[discovery] Cluster info signature and contents are valid and TLS certificate validates against pinned roots, will use API Server "192.168.2.10:6443"

[discovery] Successfully established connection with API Server "192.168.2.10:6443"

[join] Reading configuration from the cluster...

[join] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -oyaml'

[kubelet] Downloading configuration for the kubelet from the "kubelet-config-1.13" ConfigMap in the kube-system namespace

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Activating the kubelet service

[tlsbootstrap] Waiting for the kubelet to perform the TLS Bootstrap...

[patchnode] Uploading the CRI Socket information "/var/run/dockershim.sock" to the Node API object "db" as an annotation

This node has joined the cluster:

* Certificate signing request was sent to apiserver and a response was received.

* The Kubelet was informed of the new secure connection details.

Run 'kubectl get nodes' on the master to see this node join the cluster.

Check the node status on the master:

[root@node01 ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

db NotReady <none> 74s v1.13.4

node01 Ready master 38d v1.13.3

node02 Ready master 38d v1.13.3

node03 Ready master 38d v1.13.3
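When the node list grows, the nodes that need attention can be filtered out of this output. The function below is a sketch that only parses the table printed by `kubectl get nodes`; `not_ready_nodes` is an illustrative name.

```shell
# Hypothetical helper: print the NAME of every node whose STATUS does not start with "Ready".
not_ready_nodes() {
    # NR > 1 skips the header row; $2 is the STATUS column.
    awk 'NR > 1 && $2 !~ /^Ready/ { print $1 }'
}
```

Usage: `kubectl get nodes | not_ready_nodes`. For a node it reports, `kubectl describe node db` shows the conditions and events behind the status.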

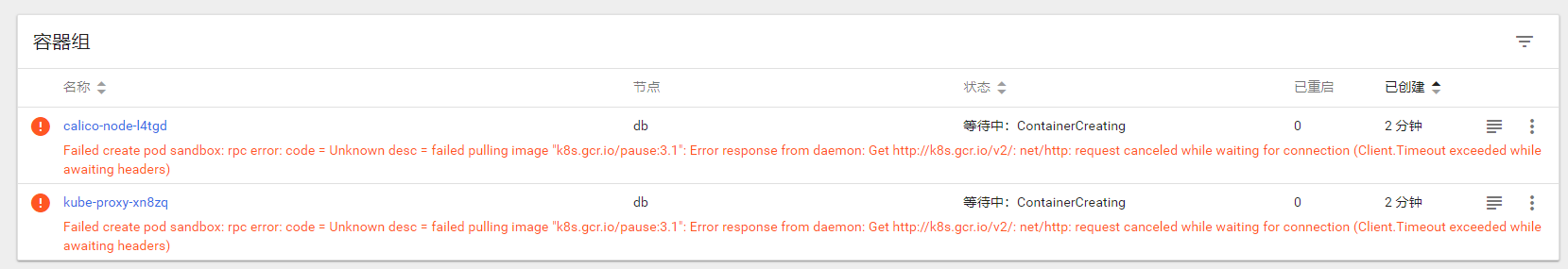

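The new node is NotReady; the cause turned out to be a problem with the images:

Following the 13.3 upgrade document, pulling the images on the new node and then restarting the docker service fixes it: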
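The exact commands are not listed here, so the following is only a sketch of what that step might look like. It assumes that the images a worker needs are the kube-proxy and pause images of the cluster release (v1.13.3 on the masters above), that the node can reach the image registry, and that docker is the container runtime; the network plugin image is not covered, and `pull_worker_images` is an illustrative name.

```shell
# Hypothetical helper: pull the kube-proxy and pause images for a given release.
pull_worker_images() {
    local version="${1:-v1.13.3}"   # assumption: matches the version of the masters
    # Ask kubeadm which images the release uses, then keep kube-proxy and pause.
    kubeadm config images list --kubernetes-version "$version" \
        | grep -E '/(kube-proxy|pause):' \
        | while read -r image; do
              docker pull "$image"
          done
}

# Afterwards, restart the runtime as described above:
#   systemctl restart docker
```

Once docker has restarted, `kubectl get nodes` on the master should show whether the node has moved to Ready.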

Keep the new node out of scheduling for now:

kubectl taint nodes db forward=node-30:NoSchedule
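To confirm the taint and to lift it later, something along these lines can be used. The function names are illustrative; the trailing `-` in the second command is the kubectl syntax for removing a taint, and the key, value, and effect must match the ones set above.

```shell
# Hypothetical helper: show the taints currently set on a node.
show_taints() {
    kubectl describe node "$1" | grep '^Taints:'
}

# Hypothetical helper: remove the forward=node-30:NoSchedule taint
# so that the scheduler may place pods on the node again.
allow_scheduling() {
    kubectl taint nodes "$1" forward=node-30:NoSchedule-
}
```

For the node in this article: `show_taints db` after tainting, and `allow_scheduling db` once the node is ready to take workloads.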